Voice-Controlled Drone Using a Fine-Tuned Large Language Model (LLM)

System Demonstration

Drone in Action (Gazebo)

Drone Running via Python Script

Overview

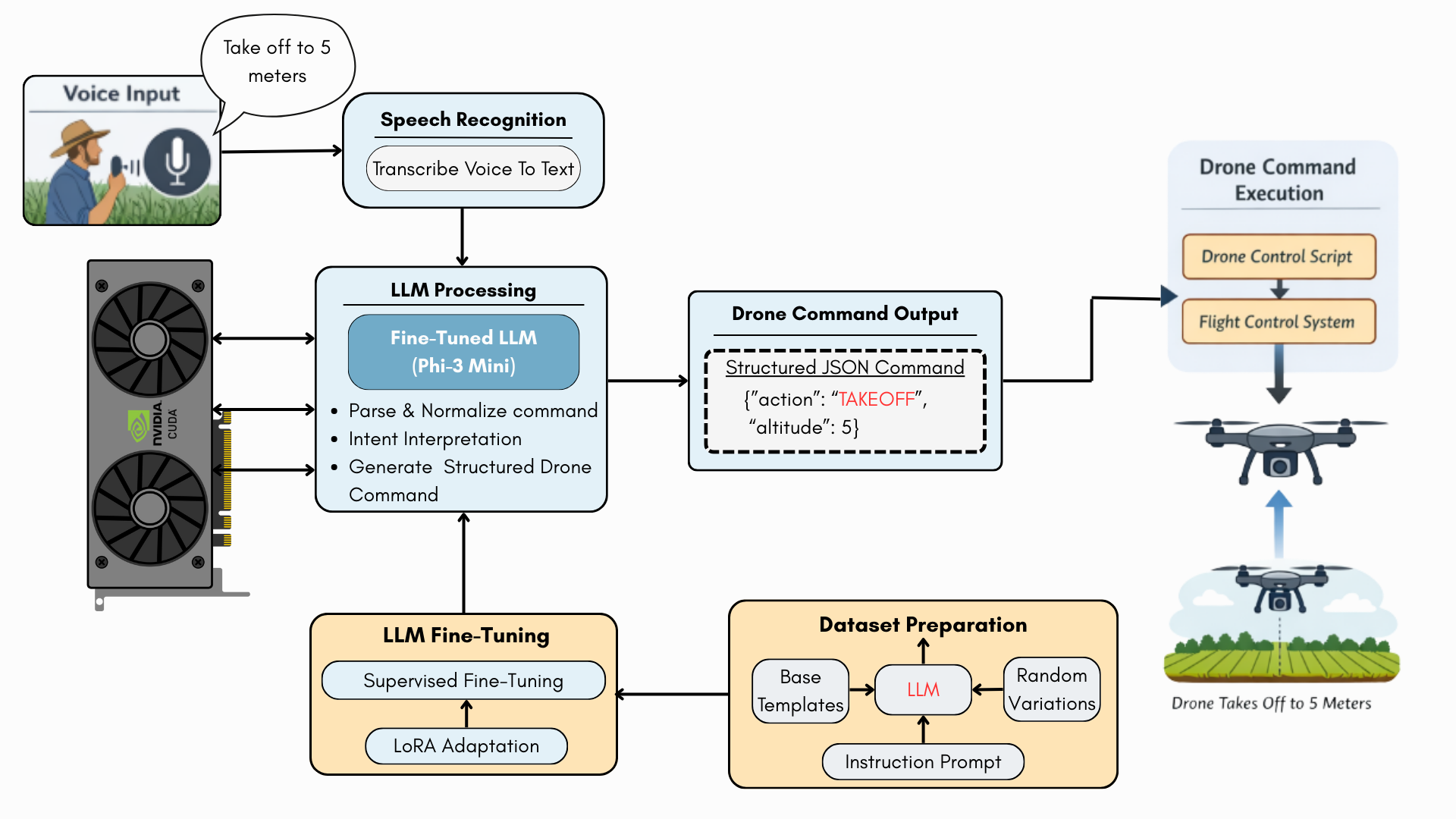

This project presents a voice-controlled unmanned aerial vehicle (UAV) system powered by a fine-tuned Large Language Model (LLM) that enables robust, natural-language-based drone control in complex real-world environments. Instead of relying on rigid command formats, the system interprets human intent from diverse, noisy, and ambiguous voice commands and converts them into structured drone actions.

The proposed framework is designed to be application-agnostic, making it suitable for use in precision agriculture, industrial inspection sites, construction and civil engineering projects, surveying and mapping operations, and search-and-rescue or disaster-response scenarios.

Motivation

Traditional drone control systems depend on either manual joystick input or strictly formatted voice commands. In real operational environments, however, operators' hands are often occupied, situations demand fast and intuitive interaction, phrasing varies significantly between users, and background noise is common.

For example, the same drone action may be expressed as “Take off to 5 meters”, “Go up 5 meters”, or “Launch and climb 5 m”. Conventional NLP pipelines struggle to handle such linguistic variability.
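To make this brittleness concrete, here is a minimal sketch of a strictly formatted matcher. The regular expression, the function name `parse_rigid`, and the output field names are hypothetical illustrations, not part of the project. The sketch only shows that a fixed grammar accepts the first phrasing above and rejects the other two.

```python
import re

# Hypothetical rigid grammar: accepts only "take off to <N> meters".
RIGID_TAKEOFF = re.compile(r"^take off to (\d+(?:\.\d+)?) meters$", re.IGNORECASE)

def parse_rigid(utterance):
    """Return a structured command, or None if the phrasing is not recognized."""
    match = RIGID_TAKEOFF.match(utterance.strip())
    if match is None:
        return None
    # Field names are assumptions made for this illustration.
    return {"action": "TAKEOFF", "altitude_m": float(match.group(1))}

phrasings = ["Take off to 5 meters", "Go up 5 meters", "Launch and climb 5 m"]
results = {p: parse_rigid(p) for p in phrasings}
for phrase, command in results.items():
    print(f"{phrase!r} -> {command}")
```

Adding more patterns covers more phrasings, but each new variant needs its own rule, which is the maintenance burden the LLM-based approach aims to avoid.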

Objectives

- Enable natural language voice commands for drone operation

- Translate unstructured speech into structured drone control commands

- Handle linguistic variability, paraphrasing, and partial ambiguity

- Improve robustness under noisy field conditions

- Provide a scalable control framework applicable across multiple domains

Challenges in Real-World Drone Operation

Drones are increasingly used in environments where operators may be walking, climbing, or inspecting structures, where visual attention is divided, and where immediate decision-making is required.

These conditions expose the limitations of rule-based or traditional NLP-based voice control systems.

Proposed Solution: LLM-Based Voice-Controlled Drone System

This work proposes the use of a fine-tuned LLM to directly model the semantic intent behind voice commands, rather than relying on surface-level keyword matching.

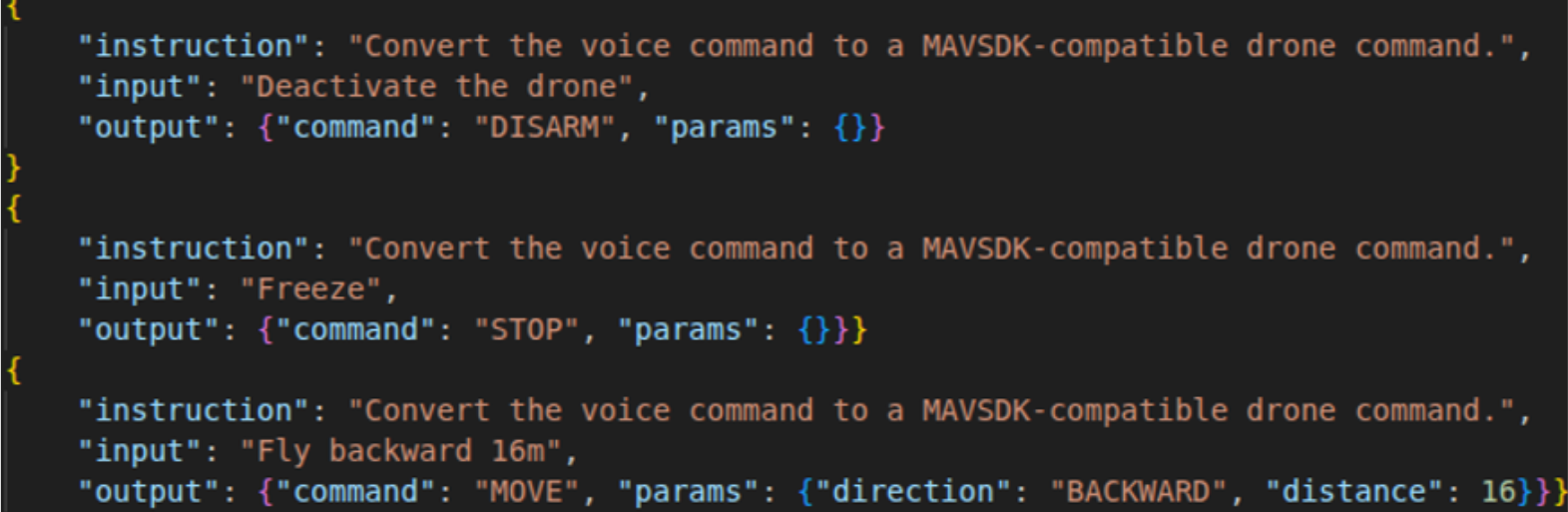

Dataset Preparation (LLM-Assisted)

The dataset covers seven fundamental drone actions: ARM, DISARM, TAKEOFF, LAND, MOVE, RTL, and STOP.

The dataset is generated using base command templates, LLM-based paraphrasing with DistilGPT-2, parameter randomization, and instruction-style JSON labeling.
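The generation steps above can be sketched roughly as follows. The templates, parameter ranges, record fields (`instruction`, `input`, `output`), and the `paraphrase` placeholder are all assumptions for illustration; the project's actual templates and label schema are not shown in this document. The paraphrasing step is stubbed out as an identity function so the sketch runs without a model.

```python
import json
import random

# Hypothetical base templates; the project's real templates are not shown here.
TEMPLATES = {
    "ARM": ["arm the drone"],
    "DISARM": ["disarm the drone"],
    "TAKEOFF": ["take off to {altitude} meters"],
    "LAND": ["land now"],
    "MOVE": ["move {direction} {distance} meters"],
    "RTL": ["return to launch"],
    "STOP": ["stop"],
}
DIRECTIONS = ["forward", "backward", "left", "right"]

def paraphrase(text):
    """Placeholder for the LLM paraphrasing step (DistilGPT-2 in the project)."""
    return text  # identity, so the sketch runs without loading a model

def make_sample(action, rng):
    """Build one instruction-style record with randomized parameters."""
    template = rng.choice(TEMPLATES[action])
    params = {}
    if "{altitude}" in template:
        params["altitude"] = rng.randint(1, 20)    # assumed range
    if "{direction}" in template:
        params["direction"] = rng.choice(DIRECTIONS)
    if "{distance}" in template:
        params["distance"] = rng.randint(1, 50)    # assumed range
    return {
        "instruction": "Convert the voice command into a drone command.",
        "input": paraphrase(template.format(**params)),
        "output": json.dumps({"action": action, "params": params}),
    }

rng = random.Random(0)  # fixed seed so the samples are reproducible
dataset = [make_sample(action, rng) for action in TEMPLATES for _ in range(3)]
print(json.dumps(dataset[0], indent=2))
```

Storing the label as a JSON string keeps each record in the instruction, input, and output shape commonly used for supervised fine-tuning.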

LLM Fine-Tuning Pipeline

The base model is Phi-3 Mini (3.8B parameters), selected for its strong instruction-following capability and its ability to run within an 8 GB GPU memory budget.

Fine-tuning used supervised fine-tuning with LoRA and 4-bit quantization, with training settings chosen to limit overfitting.
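A rough back-of-the-envelope calculation shows why 4-bit quantization and LoRA suit an 8 GB budget. The sketch below counts weight storage only and ignores activations, optimizer state, and quantization metadata. The hidden size of 3072 and the LoRA rank of 16 are assumed values for illustration, not settings reported by the project.

```python
PARAMS = 3.8e9          # Phi-3 Mini parameter count, from the text above
GPU_BUDGET_GB = 8.0     # GPU memory budget, from the text above

def weight_memory_gb(num_params, bits_per_param):
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

fp16_gb = weight_memory_gb(PARAMS, 16)
int4_gb = weight_memory_gb(PARAMS, 4)

def lora_params(d_in, d_out, rank):
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    return rank * (d_in + d_out)

# Assumed values for illustration only: hidden size 3072 and rank 16.
adapter = lora_params(3072, 3072, rank=16)
full = 3072 * 3072

print(f"fp16 weights: {fp16_gb:.1f} GB, 4-bit weights: {int4_gb:.1f} GB")
print(f"LoRA adapter: {adapter} params vs {full} in the full matrix")
```

Under these assumptions, 16-bit weights alone take about 7.6 GB, which leaves almost no headroom in 8 GB, while 4-bit weights take about 1.9 GB. The LoRA adapter for one such matrix trains roughly 1% of the parameters a full update would.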

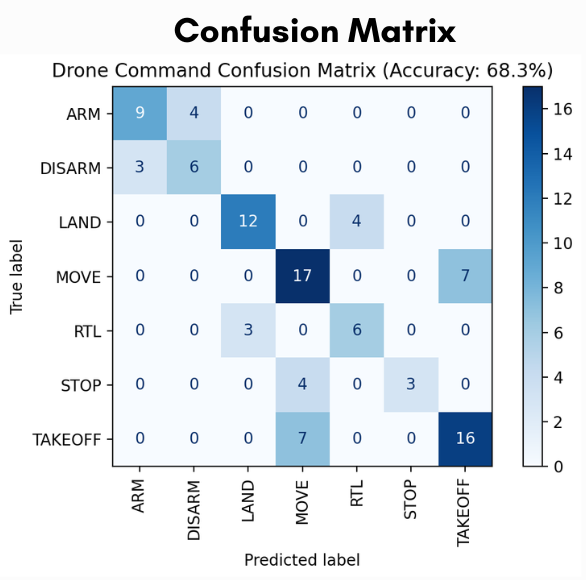

Model Evaluation

The model achieved an overall accuracy of 68.3% across seven command classes. Strong performance was observed for LAND, MOVE, and TAKEOFF, while safety-critical commands such as STOP showed confusion with MOVE.
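Overall accuracy and class-level confusion of this kind can be computed as in the sketch below. The label lists are toy data for illustration only and are not the project's evaluation results; the function name `evaluate` is likewise hypothetical.

```python
from collections import Counter

ACTIONS = ["ARM", "DISARM", "TAKEOFF", "LAND", "MOVE", "RTL", "STOP"]

def evaluate(y_true, y_pred):
    """Return overall accuracy, per-class recall, and confusion counts."""
    confusion = Counter(zip(y_true, y_pred))  # (true, predicted) -> count
    correct = sum(n for (t, p), n in confusion.items() if t == p)
    support = Counter(y_true)                 # number of true examples per class
    recall = {a: confusion[(a, a)] / support[a] for a in ACTIONS if support[a]}
    return correct / len(y_true), recall, confusion

# Toy labels for illustration only; these are not the project's results.
y_true = ["STOP", "STOP", "MOVE", "MOVE", "LAND", "TAKEOFF"]
y_pred = ["MOVE", "STOP", "MOVE", "MOVE", "LAND", "TAKEOFF"]

accuracy, recall, confusion = evaluate(y_true, y_pred)
print(f"accuracy = {accuracy:.3f}")
print(f"STOP recall = {recall['STOP']:.2f}")
print(f"STOP predicted as MOVE: {confusion[('STOP', 'MOVE')]}")
```

Reporting per-class recall alongside overall accuracy matters here because a single aggregate number can hide weak performance on a safety-critical class such as STOP.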

System Testing and Deployment

The system was validated in a Gazebo simulation environment, confirming voice-to-command translation, structured command generation, and Python-based control integration.
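The hand-off from model output to Python control code might look like the sketch below. The JSON schema, the `LoggingDrone` stand-in, and the function names are hypothetical; the project's actual vehicle interface is not described in this document. The sketch rejects any output that is not valid JSON with a known action, rather than guessing what was meant.

```python
import json

VALID_ACTIONS = {"ARM", "DISARM", "TAKEOFF", "LAND", "MOVE", "RTL", "STOP"}

def parse_command(model_output):
    """Validate the model's JSON text; raise ValueError on anything unexpected."""
    try:
        command = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(command, dict) or command.get("action") not in VALID_ACTIONS:
        raise ValueError(f"unknown or missing action: {model_output!r}")
    return command

class LoggingDrone:
    """Stand-in for a real vehicle interface; it only records requested commands."""
    def __init__(self):
        self.log = []

    def execute(self, command):
        self.log.append((command["action"], command.get("params", {})))

def handle(model_output, drone):
    """Execute a validated command; return False if the output was rejected."""
    try:
        command = parse_command(model_output)
    except ValueError:
        return False  # reject rather than guess
    drone.execute(command)
    return True

drone = LoggingDrone()
ok = handle('{"action": "TAKEOFF", "params": {"altitude": 5}}', drone)
bad = handle("fly somewhere nice", drone)
print(ok, bad, drone.log)
```

Keeping validation separate from execution means the same checks apply whether commands are sent to a simulator or, later, to a physical vehicle.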

Real-drone deployment was not performed because of flight controller constraints; the architecture is nevertheless designed to transfer to physical UAV platforms.

Significance and Future Work

This work demonstrates, in simulation, the feasibility of LLM-driven voice control for UAVs intended for complex, real-world environments. Planned future work includes:

- Safety-aware command classification

- Multilingual command support

- Real-drone deployment

- Onboard inference optimization

- Integration with vision-based decision systems